I have a new paper, coauthored with Ida Kubiszewski, Bob Costanza, and Luke Concollato, which investigates how the field of ecosystem services has developed in the decade since the founding of the journal Ecosystem Services. This is an open access publication – my first in a so-called hybrid journal. We used the University College London read and publish agreement with Elsevier to publish the paper. ANU has a similar agreement starting in 2023.

The paper updates and expands Ida and Bob's 2012 paper, "The authorship structure of ‘ecosystem services’ as a transdisciplinary field of scholarship", and compares the new results with those of the earlier analysis. We also analyse the influence that the journal Ecosystem Services has had on the field over its first 10 years. We look at which articles have had the most influence on the field (as measured by the number of citations in Ecosystem Services) and on the broader scientific literature (as measured by total citations). We also look at how authorship networks, topics, and the types of journals publishing on the topic have changed.

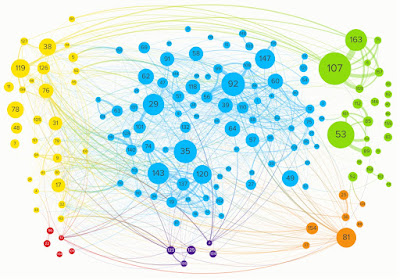

Not surprisingly, both the number of authors (12,795 to 91,051) and the number of articles published (4,948 to 33,973) on ecosystem services have grown substantially since 2012. Authorship networks have also expanded significantly, and the patterns of co-authorship have evolved in interesting ways. The most prolific authors are no longer clustered as tightly as they were 10 years ago.

The network chart shows the coauthorship relations among the 163 most prolific authors – those who have published more than 30 articles in the field. Colors indicate continent: yellow = North America, red = South America, blue = Europe, purple = Africa, green = Asia, and orange = Oceania. The greatest number of these authors is in Europe, and almost all of them collaborate with other top authors. Only in Asia and, to a lesser degree, North America are there top authors who do not collaborate with other top authors.
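For readers curious how a network like this can be derived from publication records, here is a minimal Python sketch. It is not the code used for the paper: the function name `coauthor_network`, the toy author lists, and the threshold of 1 are all invented for illustration (the chart itself uses a threshold of more than 30 articles). It keeps only the prolific authors, counts the articles each pair of them shares, and lists the prolific authors who have no link to any other prolific author.

```python
from collections import Counter
from itertools import combinations


def coauthor_network(papers, min_articles):
    """Build a coauthorship network among prolific authors.

    papers: list of author-name lists, one list per article (hypothetical input).
    min_articles: an author is "prolific" with MORE than this many articles.
    """
    # Count articles per author (an author is counted once per article).
    counts = Counter(a for authors in papers for a in set(authors))
    top = {a for a, n in counts.items() if n > min_articles}

    # An edge links two prolific authors; its weight is their shared articles.
    edges = Counter()
    for authors in papers:
        present = sorted(set(authors) & top)
        for pair in combinations(present, 2):
            edges[pair] += 1

    # Prolific authors with no coauthorship link to another prolific author.
    linked = {a for pair in edges for a in pair}
    isolated = top - linked
    return top, edges, isolated


# Toy data, made up for illustration only.
papers = [["A", "B"], ["A", "B"], ["E", "x"], ["E", "y"], ["C"]]
top, edges, isolated = coauthor_network(papers, min_articles=1)
```

In this toy example A and B are linked, while E is prolific but only ever publishes with less prolific coauthors, so E would appear as an unconnected node like those seen for Asia and North America in the chart.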

Costanza et al. (1997) is the most influential article in terms of citations in the journal Ecosystem Services, and "Global estimates of the value of ecosystems and their services in monetary units" by de Groot et al. (2012) is now the most cited article published in Ecosystem Services.

Ecosystem Services is now the most prolific publisher of articles on ecosystem services among all the journals that have published in the area. There are nine journals that are both on the list of the 20 journals cited most often in Ecosystem Services and on the list of the top 20 journals cited by articles published in Ecosystem Services: Ecosystem Services, Ecological Economics, Ecological Indicators, Science of the Total Environment, Land Use Policy, Journal of Environmental Management, PLoS One, Ecology and Society, and Environmental Science & Policy.